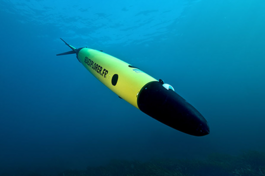

A Powerhouse of Performance in an Ultra-Small Form Factor

The military and aerospace market maintains an insatiable appetite for smaller, lighter, and cheaper systems. Recent breakthroughs in size, weight, power, and cost (SWaP-C) reduction have led to the introduction of ultra-small form factor (USFF) mission computer and networking systems.

Simplifying the Challenge of Expeditionary Communications

This white paper presents a strategic framework for overcoming obstacles to efficient communications and maintaining seamless connectivity using open standards-based, field-proven Curtiss-Wright hardware and software.

Integrated Stabilization Systems Reduce Risk and Accelerate Time to Market

When each component in the drive system is purpose-built for aiming and stabilization, integrated by experienced engineers, and proven in numerous field applications, system integrators can rest easy knowing their solutions will meet performance requirements.

Securing Data with Quantum Resistant Algorithms: Implementing Data-at-Rest and Data-in-Transit Encryption Solutions

Review the challenges of deploying quantum-resistant (QR) encryption and learn how to implement data-at-rest (DAR) and data-in-transit QR encryption solutions using commercial FPGA devices and off-the-shelf hardware.

Optimizing Army Vehicle Interoperability with Open Architecture

The goal of reducing the cost of equipping Army vehicles with new capabilities, while increasing interoperability and operational flexibility, can be accomplished through a Modular Open Systems Approach (MOSA), a technical and business strategy for designing affordable and adaptable systems.